Your Own

“Room With a View”

The film “A Room With a View” illustrates how a single visual experience can become a catalyst for lasting change. In the film, the “View” is not merely scenery. It is an unfiltered encounter with the world that disrupts routine, expectation and emotional restraint. That moment of seeing differently alters how the characters feel, think and ultimately choose.

The room with a view places a woman in direct contact with beauty, openness and possibility. This visual exposure awakens something internal before it is understood intellectually. It lowers defenses and creates emotional readiness. From that openness, connection becomes possible. The relationship that follows is not driven by logic or social structure but by an emotional response rooted in perception.

What makes this powerful is that the change does not occur through argument or persuasion. It happens through experience, and the mind has a remarkable ability to recreate such experiences internally. The view reshapes perspective first, and behavior follows. A single moment of visual clarity becomes the foundation for a relationship that challenges social norms and can redirect the course of a life.

The film suggests that when perception changes, trajectory changes. Seeing the world more clearly enables people to see themselves more honestly. In that sense, the room with a view is not about geography. It is about access to truth. And once that truth is seen, it becomes impossible to return to the confines of a limited outlook.

How the Human Brain Translates Visual Perception

Building on these findings, the need to faithfully reproduce them becomes not just a technical challenge, but a perceptual one. If a single audio and visual experience can alter emotion, decision making and life trajectory, then any distortion of that experience risks altering its meaning. The power of “A Room With a View” lies in how precisely its images, sounds and pacing communicate emotional truth. To preserve that truth, reproduction must be as close as possible to the original presentation.

Film is a carefully constructed sensory experience. Color, contrast, framing and motion are all intentional. Sound-stage dynamics, silence and spatial cues are equally deliberate. Together, they form a unified perceptual language.

When this language passes through audio and video reproduction systems, even subtle deviations can alter its meaning. Crushed shadows erase intimacy. Inaccurate color shifts emotional tone. Poor frequency response or added noise can distort spatial cues and directional hearing. Compressed dynamics diminish tension and release. These are not cosmetic flaws. They can fundamentally reshape perception and change how the story is felt and understood.

Countless natural and virtual environments beyond a hotel room or a film production can offer the same wide range of perspectives. An observation deck overlooking the Grand Canyon, a seat in a stadium at a sporting event, or a view of the ocean floor through a scuba mask each provides its own form of immersion. Each can be considered a personal room with a view, defined not by walls but by the experience and the perspective it delivers.

As an aviator, some of my best rooms with a view are experienced in real time from the cockpit of the aircraft I am flying. From this vantage point, live images unfold that are often more compelling than anything a hotel room could offer. One example is a view we had of multicell cluster thunderstorms over the southeastern region of Georgia, where we were instructed to deviate by descending to fly beneath these convective systems. It was a room with virtually unlimited viewing and power, offering perspective and awe in equal measure.

Let’s Explore the Science Behind This

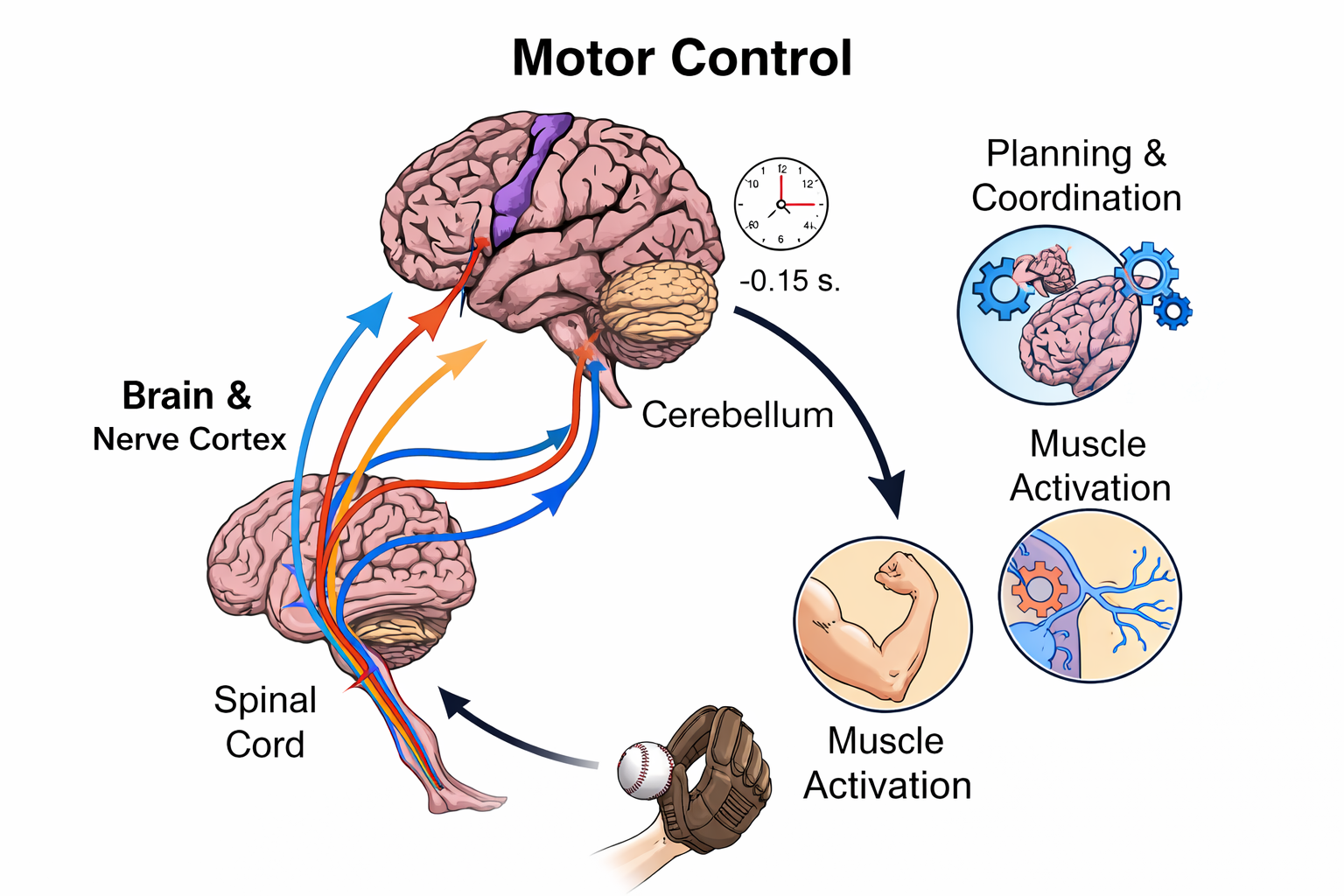

The human brain is a remarkable part of the body, operating simultaneously across multiple scales. It manages ultra-fast reflexes alongside slower, deliberate conscious decisions. From birth, the brain functions as a powerful learning machine, much like a sponge of seemingly unlimited capacity, absorbing data while continuously sampling, comparing, storing and responding to sudden changes. It does this across visual understanding, auditory reflexes, speech comprehension, temperature sensing and motor control, to name a few.

Visual scene understanding

The brain creates a 3D view by combining multiple visual cues into a single spatial model of the world. Depth is not seen directly. It is computed.

The brain can extract the “gist” of a scene such as indoors vs outdoors or calm vs dangerous in under 100 milliseconds. Full detail comes later, but context is established almost instantly. The visual system can register basic shapes and threats extremely quickly. Studies show the brain can detect an image, such as a face or an animal, in roughly 13 milliseconds even before conscious awareness kicks in.

Binocular vision and stereopsis

Humans have two forward facing eyes separated by a small distance. Each eye sees the world from a slightly different angle. This difference is called binocular disparity. The brain compares the two images and calculates depth. Objects with greater disparity are interpreted as closer while objects with less disparity are farther away. This process is known as stereopsis and is the strongest contributor to true 3D perception at close and medium distances.

Convergence and focus

When you look at a nearby object, your eyes rotate inward. The brain measures this convergence angle and uses it as a distance cue. At the same time the eye’s lens changes shape to focus. While focus plays a smaller role in adults, convergence remains an important depth signal especially at short range.
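The geometry behind convergence and disparity can be sketched with a little trigonometry. The example below is an illustration only: it assumes a point straight ahead of the viewer and an interpupillary distance of 63 mm, a value chosen for the example rather than taken from this article. The function names are hypothetical.

```python
import math

# Assumed average adult interpupillary distance in metres (illustrative value).
IPD_M = 0.063


def vergence_angle_deg(distance_m: float, ipd_m: float = IPD_M) -> float:
    """Angle between the two lines of sight when both eyes fixate a point
    straight ahead at the given distance."""
    return math.degrees(2.0 * math.atan(ipd_m / (2.0 * distance_m)))


def relative_disparity_deg(near_m: float, far_m: float, ipd_m: float = IPD_M) -> float:
    """Difference in vergence angle between a nearer and a farther point,
    used here as a simple stand-in for binocular disparity."""
    return vergence_angle_deg(near_m, ipd_m) - vergence_angle_deg(far_m, ipd_m)


if __name__ == "__main__":
    for d in (0.25, 0.5, 1.0, 2.0, 10.0, 100.0):
        print(f"{d:7.2f} m  vergence {vergence_angle_deg(d):7.3f} deg")
    # The same 10 cm depth step yields far less disparity at long range.
    print(relative_disparity_deg(0.5, 0.6), relative_disparity_deg(10.0, 10.1))
```

Under these assumptions the angle shrinks rapidly with distance, which is consistent with convergence and stereopsis being most useful at close and medium range.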

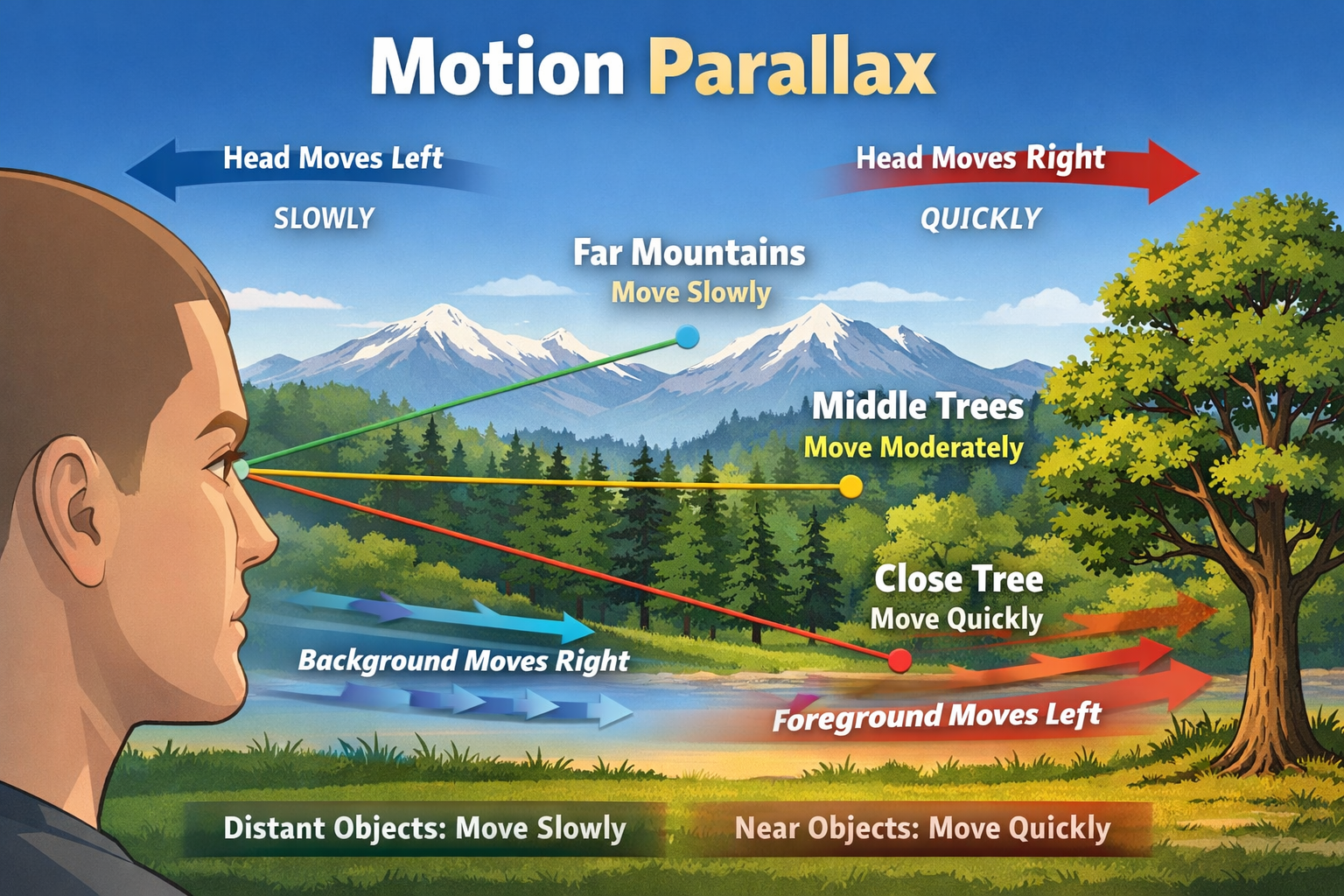

Motion parallax

When you move your head, nearby objects shift position faster than distant ones. The brain uses this relative motion to infer depth even with one eye closed. This is why depth perception still works in monocular viewing and why motion enhances realism in video and VR.

Perspective and size relationships

The brain learns how the world behaves. Parallel lines converge in the distance. Objects of known size appear smaller when farther away. Overlapping objects indicate which is closer. These learned rules allow the brain to construct depth from a flat image such as a photograph or a screen.

Lighting and shadows

Shadows reveal shape and distance. The brain assumes light usually comes from above and interprets shading accordingly. Subtle changes in contrast and shadow direction strongly influence perceived depth and realism.

Visual cortex integration

All of these cues are processed in stages across the visual cortex. No single area creates 3D vision. Depth emerges from the integration of disparity, motion, geometry and life experience into a coherent spatial model. The result feels immediate and natural even though it is computationally complex.

In essence, the brain does not see in 3D the way a camera records depth. It infers depth by comparing differences, detecting motion and applying lifetime learned rules. A convincing 3D view is the brain’s best interpretation of the information it receives.

Digital Video Noise

How HDMI Defines When Noise is “Clean Enough”

HDMI does not aim for zero noise. It aims for error free transport after equalization and FEC (Forward Error Correction). The link is considered valid if it achieves:

• BER ≤ 1 × 10⁻¹² before FEC (Forward Error Correction)

• BER effectively zero after FEC

• Stable eye opening at the receiver slicer

This BER target gives us a way to translate noise tolerance.

Practical SNR (Signal to Noise) equivalents for FRL (Fixed Rate Link)

1 part per 1,000 (0.1% noise | ~60 dB SNR)

Yield: Completely unusable

At this level:

• Eye waveform is largely closed

• Any FRL training fails

• Link drops or never establishes

This is far beyond what HDMI can tolerate.

1 part per 10,000 (0.01% noise | ~80 dB SNR)

Yield: Marginal to unstable

At this level:

• FRL may train intermittently

• FEC is overwhelmed

• Dropouts, sparkles or black screens appear

This is where poor transmission lines (cables), weak sources or marginal sinks live.

1 part per 100,000 (0.001% noise | ~100 dB SNR)

Yield: Lower bound of stable FRL operation

At this level:

• Eye waveform is open but margins are thin

• FEC is active but effective

• Link may pass short term tests but fail over temperature or time

This is often what barely compliant 48G links achieve.

1 part per 1,000,000 (0.0001% noise | ~120 dB SNR)

Yield: Robust, reference quality FRL

At this level:

• Large eye waveform opening

• Minimal reliance on FEC

• Stable across length, temperature and real-world conditions

This is where high quality passive copper, hybrid fiber and well-designed active solutions land when truly engineered for 48G.
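The decibel figures in the scale above follow from a simple conversion. As a minimal sketch, assuming "1 part per N" describes the ratio of noise amplitude to signal amplitude (so that dB = 20·log10(N)), the mapping can be reproduced as follows. The function names are illustrative.

```python
import math


def parts_to_snr_db(n: float) -> float:
    """SNR in dB when noise amplitude is 1/n of the signal amplitude.
    Assumes an amplitude (voltage) ratio, hence the factor of 20."""
    return 20.0 * math.log10(n)


def parts_to_percent(n: float) -> float:
    """Noise expressed as a percentage of the signal amplitude."""
    return 100.0 / n


if __name__ == "__main__":
    for n in (1_000, 10_000, 100_000, 1_000_000):
        print(f"1 part per {n:>9,}: {parts_to_percent(n):.4f}% noise, "
              f"{parts_to_snr_db(n):.0f} dB SNR")
```

If the ratio were instead a power ratio, the factor would be 10 rather than 20 and each step would be worth half as many decibels.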

Why HDMI needs such extreme SNR

At 12 Gbps per lane:

• Unit intervals are ~83 picoseconds

• Jitter tolerance is measured in single digit picoseconds

• Crosstalk, return loss and power noise all stack

Even tiny noise contributions quickly consume eye waveform margins. Unlike audio or video perception, HDMI has a hard cliff. Once SNR falls below threshold, the picture does not degrade gracefully. It fails.
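The timing figures above can be checked with basic arithmetic: one unit interval is the reciprocal of the lane bit rate. The sketch below uses the 12 Gbps rate from the text. The lower lane rates in the loop and the 5 ps jitter figure are illustrative inputs, not specification values.

```python
def unit_interval_ps(lane_rate_gbps: float) -> float:
    """Duration of one bit (unit interval) in picoseconds."""
    return 1000.0 / lane_rate_gbps


def jitter_fraction_of_ui(jitter_ps: float, lane_rate_gbps: float) -> float:
    """Share of the unit interval consumed by a given amount of jitter."""
    return jitter_ps / unit_interval_ps(lane_rate_gbps)


if __name__ == "__main__":
    for rate in (6.0, 8.0, 10.0, 12.0):  # illustrative lane rates in Gbps
        print(f"{rate:5.1f} Gbps -> UI = {unit_interval_ps(rate):6.1f} ps")
    # Hypothetical 5 ps of jitter at 12 Gbps:
    print(f"{jitter_fraction_of_ui(5.0, 12.0):.1%} of the unit interval")
```

Even a few picoseconds take a noticeable share of an 83 ps bit period, which is why several small impairments stacked together can close the eye.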

The key distinction

• Audio and video SNR degrade perceptually

• HDMI FRL SNR degrades catastrophically

There is no “slightly noisy HDMI.” There is only working or not working.

Practical takeaway for modern HDMI FRL systems:

• ~1:10,000 is barely stable

• ~1:1,000,000 is where real world reliability lives

• Anything below that risks intermittent failure

This is why lab grade testing focuses on eye waveform margins, jitter tolerance and stress testing, not just pass-fail link checks. It also explains why cables that “work on the bench” can fail in the field.

Factors Influencing Human Brain Interpretation of Reproduced Sound

The brain does not hear frequencies in isolation. It recognizes patterns, relationships and timing. Natural sounds have harmonic structures that follow predictable mathematical relationships. When an audio system adds harmonic distortion, it injects new frequency components that were not part of the original acoustical event. The brain must decide whether these additions belong to the sound or are artifacts.

Total Harmonic Distortion (THD)

Harmonic distortion affects the brain not simply by adding “wrong” frequencies, but by altering the cues the brain relies on to interpret realism, space and intent. The impact is perceptual, cognitive and emotional, not just technical.
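For readers who want the number behind the term, THD is commonly defined as the ratio of the combined (root-sum-square) amplitude of the harmonics to the amplitude of the fundamental. The sketch below applies that common definition to made-up harmonic amplitudes. The values are illustrative, not measurements.

```python
import math


def thd_percent(fundamental: float, harmonics: list[float]) -> float:
    """THD as the root-sum-square of harmonic amplitudes divided by the
    fundamental amplitude, expressed in percent."""
    return 100.0 * math.sqrt(sum(h * h for h in harmonics)) / fundamental


def thd_db(fundamental: float, harmonics: list[float]) -> float:
    """The same ratio expressed in decibels relative to the fundamental."""
    return 20.0 * math.log10(thd_percent(fundamental, harmonics) / 100.0)


if __name__ == "__main__":
    # Hypothetical amplitudes: fundamental 1.0, 2nd harmonic 0.003, 3rd harmonic 0.004.
    fund, harms = 1.0, [0.003, 0.004]
    print(f"THD = {thd_percent(fund, harms):.3f}%  ({thd_db(fund, harms):.1f} dB)")
```

A single THD figure says nothing about which harmonic orders dominate or how they change with level, which is the part that matters perceptually in the discussion that follows.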

Low level harmonic distortion

At very low levels, harmonic distortion can be inaudible or even perceived as benign. Even order harmonics in particular can blend with the original tone and may be interpreted as warmth or richness. The brain accepts these additions because they loosely align with natural harmonic series.

Higher level or complex harmonic distortion

As distortion increases, especially when multiple harmonic orders are present, the brain begins to struggle. These added components do not track the original signal dynamically. They blur fine structure, smear transients and reduce clarity. The brain expends effort separating signal from artifact, which, over time, increases listening fatigue.

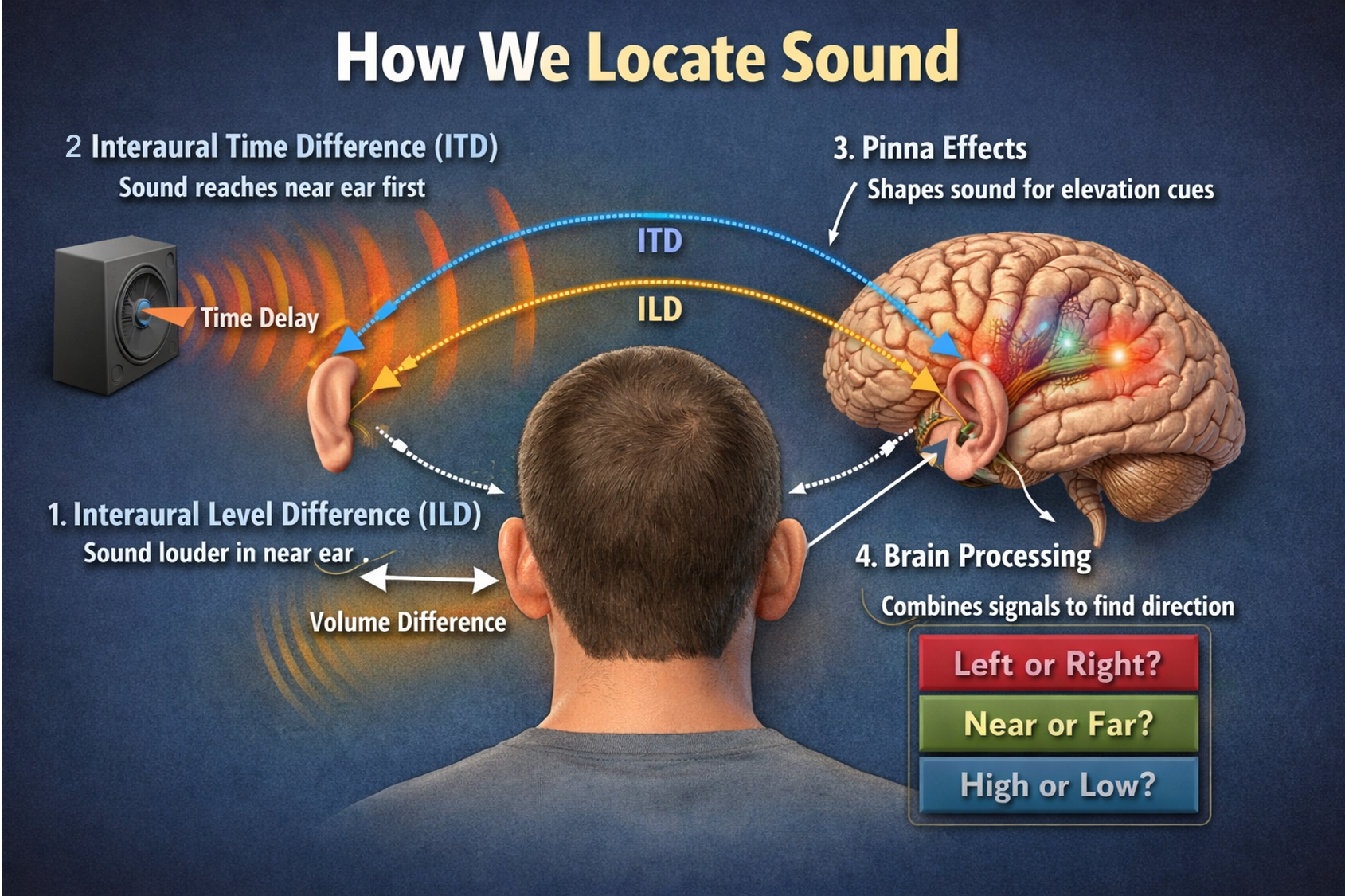

Impact on spatial perception

Spatial hearing depends on precise timing, phase and spectral cues. Harmonic distortion disrupts these cues by introducing energy that is not spatially coherent with the original source. Imaging becomes less stable. Sound stage collapses. Depth cues flatten. The brain can no longer reliably anchor sounds in space.

Masking of low-level detail

Harmonic distortion raises the noise floor locally around tones and transients. This masks subtle information such as room reflections, decay tails and micro dynamics. The brain loses context and realism even if the system sounds loud and detailed.

Temporal effects matter more than numbers

Distortion that varies with level or frequency is particularly damaging. Nonlinear behavior causes harmonics to appear and disappear dynamically, which the brain perceives as roughness or glare. This is more objectionable than steady, low-level distortion because the auditory system is highly sensitive to modulation.

Listening fatigue and cognitive load

When distortion is present, the brain works harder to interpret what it hears. This increases cognitive load and leads to fatigue over time. The listener may not consciously identify distortion, but will feel discomfort, tension or a desire to turn the system off.

Why transparency matters

A transparent audio system allows the brain to operate in recognition mode rather than correction mode. When harmonic distortion is sufficiently low and well controlled, the brain stops analyzing the playback system and focuses on the performance. Immersion increases and fatigue decreases because the brain is no longer preoccupied with system flaws and can attend to listening itself.

In short, harmonic distortion interferes with the brain’s ability to trust what it hears. At low levels it may be tolerated or even perceived as pleasant, but as it rises or becomes complex, it degrades spatial accuracy, masks detail and increases mental effort. The most convincing audio systems minimize distortion so the brain can simply listen rather than compensate.

Intermodulation Distortion (IMD)

Intermodulation distortion affects the human brain more severely than harmonic distortion because it breaks the natural mathematical relationships the auditory system expects. While harmonic distortion adds predictable overtones, intermodulation distortion creates new frequencies that have no natural connection to the original sounds. The brain perceives this as instability, congestion and fatigue.

Why intermodulation distortion is especially damaging

The auditory system evolved to recognize harmonic structures. Musical instruments, voices and environmental sounds all produce harmonics that are integer multiples of a fundamental. Intermodulation distortion generates sum and difference products between unrelated frequencies. These products do not belong to any harmonic series. The brain cannot easily categorize them.
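The sum and difference products described above can be enumerated directly. The sketch below lists every product of the form m·f1 + n·f2 up to third order for two test tones. The 19 kHz and 20 kHz tones are an illustrative choice, and the function name is hypothetical.

```python
def intermod_products(f1: float, f2: float, max_order: int = 3) -> dict[int, list[float]]:
    """Positive frequencies of the form m*f1 + n*f2 where both tones take part
    (m and n nonzero), grouped by order |m| + |n|."""
    products: dict[int, set[float]] = {}
    for m in range(-max_order, max_order + 1):
        for n in range(-max_order, max_order + 1):
            order = abs(m) + abs(n)
            if m == 0 or n == 0 or order > max_order:
                continue  # skip pure harmonics and anything above max_order
            freq = m * f1 + n * f2
            if freq > 0:
                products.setdefault(order, set()).add(freq)
    return {order: sorted(freqs) for order, freqs in sorted(products.items())}


if __name__ == "__main__":
    for order, freqs in intermod_products(19_000, 20_000).items():
        print(f"order {order}: {freqs}")
```

For these two tones the second-order difference product lands at 1 kHz and the third-order products at 18 kHz and 21 kHz. None of these is an integer multiple of either tone, which is the sense in which they fall outside any harmonic series.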

Loss of clarity and separation

When multiple tones are present, intermodulation distortion produces additional tones that move dynamically as the original signals change. This causes:

• Blurring of instrument boundaries

• Reduced separation in complex mixes

• A sense of congestion or “thickness”

The brain struggles to track individual sources because spurious components overlap with real content.

Destruction of low-level detail

Intermodulation products often fall into mid frequency regions where hearing is most sensitive. They mask fine details such as reverberation tails, breath sounds and micro dynamics. Even at low overall distortion levels, IMD can significantly reduce perceived resolution.

Impact on spatial perception

Spatial hearing depends on consistent phase and amplitude relationships across frequencies. Intermodulation distortion injects energy that is not spatially coherent with the original sources. Imaging becomes unstable. Sound stage depth collapses. The sense of space flattens because the brain cannot reconcile the added components with the expected spatial cues.

Increased listening fatigue

Because IMD changes continuously with signal content, the brain is forced into constant error correction. This increases cognitive load. The listener may describe the sound as harsh, busy or tiring even when it is not obviously distorted.

Why IMD is Often More Audible than Harmonic Distortion

A system with very low harmonic distortion can still sound poor if intermodulation distortion is high. The ear is far more sensitive to non-harmonic artifacts than to low level harmonics. This is why IMD is often a better predictor of perceived sound quality than THD alone.

In real-world systems, IMD commonly arises from:

• Amplifiers driven near their limits

• Loudspeaker drivers with nonlinear excursion

• Poor crossover design

• Inadequate power supplies

In each case, complex music causes distortion products that were never present in the original recording.

From a Brain’s Perspective

The brain wants coherence. Intermodulation distortion destroys coherence. Instead of reinforcing the structure of sound, it undermines it, forcing the brain to work harder and reducing emotional engagement.

In short, intermodulation distortion degrades an audio reproduction system by injecting non-harmonic, dynamically changing artifacts that confuse the auditory system, reduce clarity, collapse spatial cues and increase listening fatigue. Minimizing IMD is essential for transparency and long-term listening comfort.

Auditory localization

To determine where a sound comes from, the brain compares arrival times between the ears. It can detect timing differences as small as 5 to 10 microseconds. That is shorter than a single sample period of 44.1 kHz digital audio (about 22.7 microseconds) and is one reason why spatial hearing is so precise.
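To put these timing differences in perspective, the sketch below uses a deliberately simple path-difference model of interaural time difference, ITD = d·sin(θ)/c. The 0.18 m ear spacing and 343 m/s speed of sound are assumptions for illustration, and the model ignores the way sound bends around the head.

```python
import math

SPEED_OF_SOUND_M_S = 343.0   # assumed, roughly room temperature air
EAR_SPACING_M = 0.18         # assumed ear-to-ear distance (illustrative)


def itd_us(azimuth_deg: float,
           ear_spacing_m: float = EAR_SPACING_M,
           c: float = SPEED_OF_SOUND_M_S) -> float:
    """Interaural time difference in microseconds for a distant source at the
    given azimuth, using a straight-line path-difference approximation."""
    return 1e6 * ear_spacing_m * math.sin(math.radians(azimuth_deg)) / c


def sample_period_us(sample_rate_hz: float) -> float:
    """Time between consecutive samples in microseconds."""
    return 1e6 / sample_rate_hz


if __name__ == "__main__":
    for angle in (1, 5, 30, 90):
        print(f"{angle:3d} deg -> ITD {itd_us(angle):7.1f} us")
    print(f"44.1 kHz sample period: {sample_period_us(44_100):.2f} us")
```

In this simplified model, a source only one degree off center already produces an ITD in the 5 to 10 microsecond range quoted above.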

Acoustic center alignment

Each speaker driver has an acoustic center, the point from which sound effectively radiates. This point is not always at the front of the cone or dome and it differs between tweeters, midrange and woofers. Tweeters often have acoustic centers farther back due to dome geometry and waveguides, while woofers can project forward. Offsetting drivers physically helps align these acoustic centers so their sound arrives at the listener simultaneously.

Time alignment at the listening position

If all drivers are mounted on a flat baffle, the output of the higher frequency drivers often arrives earlier than that of the lower frequency drivers because of their acoustic center positions and crossover phase shifts. By recessing or advancing certain drivers, designers correct these delays acoustically rather than electronically. When aligned, transients such as drum hits and plucked strings sound sharper and more natural.
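The size of the timing error caused by a physical offset is easy to estimate: delay equals distance divided by the speed of sound, and the resulting phase shift at a given frequency is 360° × frequency × delay. The sketch below assumes 343 m/s. The offset and crossover frequency are hypothetical values for illustration.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed, roughly room temperature air


def offset_delay_us(offset_mm: float, c: float = SPEED_OF_SOUND_M_S) -> float:
    """Arrival-time difference in microseconds caused by a front-to-back
    offset between two acoustic centers."""
    return (offset_mm / 1000.0) / c * 1e6


def phase_shift_deg(offset_mm: float, freq_hz: float,
                    c: float = SPEED_OF_SOUND_M_S) -> float:
    """Phase difference in degrees that the delay represents at one frequency."""
    return 360.0 * freq_hz * offset_delay_us(offset_mm, c) * 1e-6


if __name__ == "__main__":
    offset_mm, crossover_hz = 25.0, 2500.0  # hypothetical design values
    print(f"{offset_mm} mm offset -> {offset_delay_us(offset_mm):.1f} us delay, "
          f"{phase_shift_deg(offset_mm, crossover_hz):.1f} deg at {crossover_hz:.0f} Hz")
```

A few centimeters of offset amounts to a substantial fraction of a cycle at typical crossover frequencies, which is why a seemingly small step in the baffle can matter.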

Phase coherence through the crossover region

At crossover frequencies, two drivers operate together. If their outputs are not time aligned, phase interference occurs, causing lobing, cancellations or smearing. Physical offset helps maintain phase coherence across the crossover region, preserving smooth frequency response and stable imaging.

Minimizing electronic correction

Some designers prefer physical alignment to avoid heavy DSP (Digital Signal Processing) or complex crossover networks. Mechanical alignment is inherently broadband and does not introduce latency, quantization noise or additional group delay.

Improving stereo imaging

When drivers are time aligned, the wave front behaves more like it comes from a single point source closely resembling the original recorded event. This improves localization, depth and sound stage stability. Imaging becomes less dependent on listener position and more consistent across the listening area.

Why speakers can look unusual

Physically offset drivers can make cabinets deeper or stepped, which is visually distinctive. The visual asymmetry is a byproduct of an acoustic goal, not a stylistic choice.

In short, speakers use different driver depths to ensure that sound from all frequency ranges arrives together, stays in phase through the crossover and preserves the timing information your brain relies on for realism and spatial accuracy.

Speech Comprehension

Spoken language is processed as it arrives. The brain decodes phonemes, words and meaning in real time with no noticeable lag even though this involves complex pattern recognition and prediction happening in parallel.

Your Listening Room

An Active Component for Sound Reproduction.

The acoustical signature of a room is a defining factor in how music is perceived and understood within a listening space. Sound does not arrive at the listener directly from the loudspeakers alone. It interacts continuously with the room itself, reflecting from walls, ceilings and floors, diffusing through furnishings and decaying over time. These interactions shape timing, tonal balance, spatial cues and emotional impact. As a result, the room becomes an active component of the reproduction system rather than a passive container.

Music is created and recorded in spaces that already possess their own acoustical identities. Concert halls, studios and scoring stages impart characteristic reverberation times, early reflections and spatial coherence that are embedded in the recording. A listening room that fails to manage its own acoustical signature can mask or distort those cues. Excessive reverberation can blur transients and reduce clarity. Insufficient reverberation can strip music of scale and warmth. Uneven reflections can collapse imaging or pull instruments away from their intended positions. In each case, the room overrides the acoustic information carried in the recording.

Accurate music reproduction depends on controlling how a room supports direct sound while managing reflected energy. Early reflections influence localization and image stability. Low frequency room modes shape perceived bass weight and pitch accuracy. Decay time affects musical flow, determining whether notes breathe naturally or linger unnaturally. When these elements are balanced, the listener perceives not the room itself but the recorded space, allowing the illusion of presence to emerge.

Importantly, this is not about creating a perfectly dead environment. Music relies on space to feel alive. The goal is coherence, not silence. A well-designed listening room preserves the timing relationships and spectral balance of the original performance while adding minimal coloration of its own.

The room’s acoustical signature should complement the recording rather than compete with it.

In this sense, the listening room functions as the final instrument in the playback chain. Loudspeakers and electronics deliver the signal, but the room determines how that signal is resolved by the human auditory system. When the acoustical signature is carefully considered, music gains depth, realism and emotional credibility. The listener no longer hears sound reproduced in a room. Instead, the room disappears, and the music takes its place.

The frequency signature of a room describes how that space amplifies, attenuates and reshapes sound across the audible spectrum. Unlike electronic components, a room does not behave uniformly with frequency. Its influence is strongly frequency dependent and tied directly to physical dimensions, surface materials and geometry. This uneven interaction is one of the primary reasons two identical playback systems can sound dramatically different in different rooms.

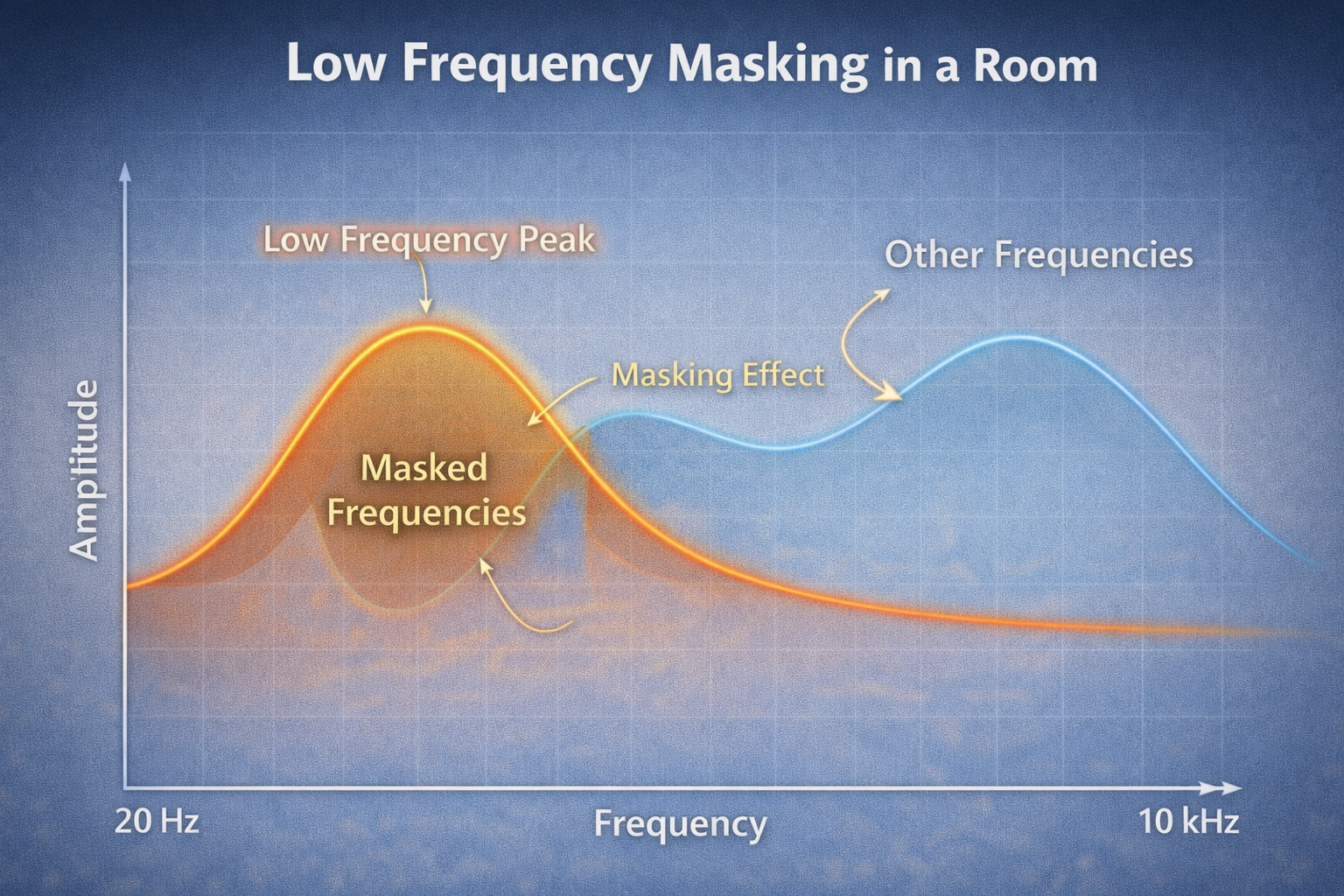

The most dominant effects occur in the low frequency region, typically below about 300 Hz. In this range, sound wavelengths are long relative to room dimensions, causing standing waves or room modes. These modes create peaks and nulls in the frequency response at specific locations. A bass note may be exaggerated in one spot and nearly disappear in another. Because many musical fundamentals and rhythmic anchors reside in this region, these variations can obscure pitch definition and timing. When low frequencies are uneven, they can also mask higher-frequency detail, as depicted in the accompanying illustration. This can be corrected by altering the room acoustics mechanically or by applying active equalization to tame these peaks and valleys.

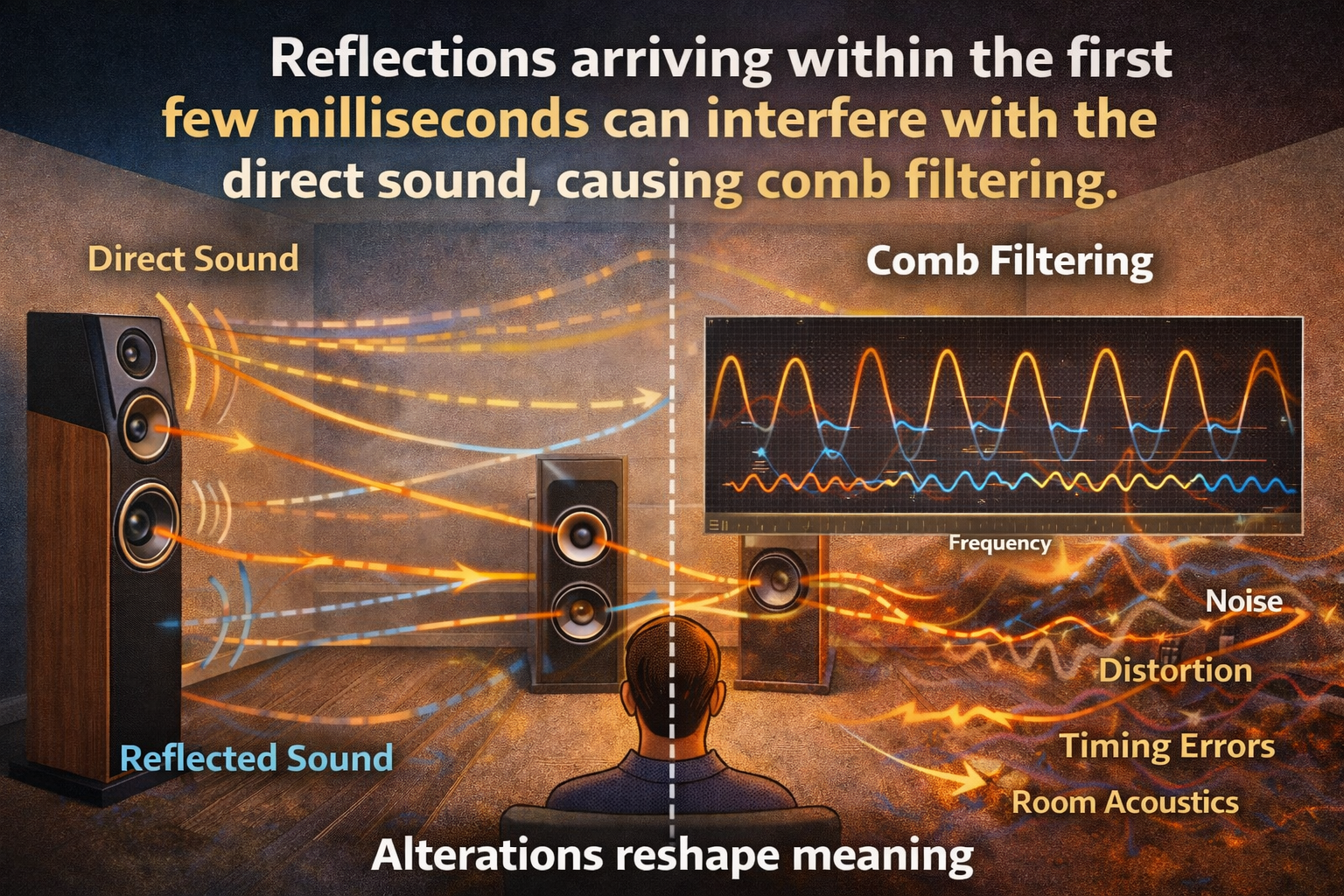
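The simplest room modes, the axial modes between two parallel surfaces, can be estimated from a single dimension with f = n·c/(2L). The sketch below assumes 343 m/s and a hypothetical 5.0 m × 4.0 m × 2.5 m room. Real rooms also have tangential and oblique modes that this ignores.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed, roughly room temperature air


def axial_modes_hz(dimension_m: float, count: int = 4,
                   c: float = SPEED_OF_SOUND_M_S) -> list[float]:
    """First `count` axial mode frequencies between two parallel surfaces."""
    return [n * c / (2.0 * dimension_m) for n in range(1, count + 1)]


def wavelength_m(freq_hz: float, c: float = SPEED_OF_SOUND_M_S) -> float:
    """Wavelength of sound in air at the given frequency."""
    return c / freq_hz


if __name__ == "__main__":
    room = {"length": 5.0, "width": 4.0, "height": 2.5}  # hypothetical room
    for name, dim in room.items():
        modes = ", ".join(f"{f:.1f}" for f in axial_modes_hz(dim))
        print(f"{name:6s} {dim:.1f} m -> {modes} Hz")
    print(f"wavelength at 50 Hz: {wavelength_m(50.0):.2f} m")
```

When two dimensions share mode frequencies, as integer-related dimensions do, the peaks and nulls reinforce each other, which is one reason room proportions matter.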

The midrange, roughly 300 Hz to 2 kHz, is where the ear is most sensitive and where much of the harmonic structure of voices and instruments resides. Room effects here are less about standing waves and more about early reflections. Reflections arriving within the first few milliseconds can interfere with the direct sound, causing comb filtering. This produces a series of frequency cancellations and reinforcements that alter timbre. Instruments can lose natural body, vocals may sound hollow or nasal and subtle harmonic cues that distinguish one instrument from another can be smeared or flattened. Because the brain relies heavily on midrange information for identification, even small distortions in this region can significantly alter musical perception.
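Comb filtering from a single reflection can be sketched numerically. Assuming one reflection of the same polarity that travels an extra path length Δd, the delay is τ = Δd/c and cancellations fall at odd multiples of 1/(2τ). The speed of sound and path difference below are illustrative assumptions.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed, roughly room temperature air


def reflection_delay_ms(extra_path_m: float, c: float = SPEED_OF_SOUND_M_S) -> float:
    """Delay of a reflection relative to the direct sound, in milliseconds."""
    return 1000.0 * extra_path_m / c


def comb_notches_hz(extra_path_m: float, count: int = 3,
                    c: float = SPEED_OF_SOUND_M_S) -> list[float]:
    """First `count` cancellation frequencies for a single same-polarity
    reflection: f = (2k + 1) / (2 * delay)."""
    delay_s = extra_path_m / c
    return [(2 * k + 1) / (2.0 * delay_s) for k in range(count)]


if __name__ == "__main__":
    extra = 0.343  # hypothetical extra path length in metres (about 1 ms)
    notches = ", ".join(f"{f:.0f}" for f in comb_notches_hz(extra))
    print(f"delay {reflection_delay_ms(extra):.2f} ms -> notches at {notches} Hz")
```

With a delay of about one millisecond the first notches land squarely in the midrange, which is why early reflections alter timbre so audibly.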

At higher frequencies, above roughly 2 kHz, the room’s influence shifts again. Wavelengths are short, and sound becomes more directional. Here, surface materials and diffusion play a larger role than room dimensions. Excessive absorption can make the sound dull and lifeless, while overly reflective surfaces can introduce harshness or glare. Although high frequencies carry less energy, they are critical for spatial cues, articulation and the sense of air around instruments. When these frequencies are poorly managed, imaging collapses and instruments can blend together rather than occupying distinct locations.

Masking occurs when the room reinforces certain frequency bands disproportionately. A strong low-frequency resonance can obscure midrange detail, making bass-heavy instruments dominate the presentation and pushing vocals or strings into the background. Similarly, uneven midrange reflections can blur harmonic content, causing instruments with overlapping spectra to lose separation. The result is not simply a tonal imbalance but a loss of intelligibility and emotional nuance.

Ultimately, the frequency signature of a room shapes how the brain organizes sound into meaningful objects. When that signature is uncontrolled, the room imposes its own hierarchy on the reproduced audio, emphasizing some elements while concealing others. Proper acoustic treatment aims to smooth low-frequency response, control early reflections and preserve high-frequency spatial information. When these factors are balanced, the room stops competing with the recording, allowing each instrument to be heard with clarity, scale and intent.

Audio Noise

Audio perception follows a similar pattern to vision, but the ear is even more sensitive to noise during quiet passages and pauses. Expressing this in parts per N maps cleanly to how objectionable noise becomes in real listening conditions.

Below is a practical and perceptually grounded scale for audio signal to noise ratio, assuming broadband noise referenced to nominal signal level.

1 part per 1,000 (0.1% noise | ~60 dB SNR)

Yield: Clearly audible and objectionable

At this level:

• Hiss or noise is obvious during quiet passages

• Low level detail is masked

• Sound stage collapses and spatial cues blur

This is unacceptable for high fidelity audio and is immediately noticeable to most listeners.

1 part per 10,000 (0.01% noise | ~80 dB SNR)

Yield: Audible to many listeners, borderline acceptable

At this level:

• Noise is audible in pauses and fades

• Dynamic contrast is reduced

• Trained listeners notice it quickly, casual listeners may tolerate it

This roughly corresponds to good consumer analog gear and early digital systems.

1 part per 100,000 (0.001% noise | ~100 dB SNR)

Yield: Generally inaudible in normal listening

At this level:

• Noise disappears beneath the auditory masking threshold

• Spatial imaging and microdynamics are preserved

• Silence sounds silent

This aligns with modern high-quality DACs, amplifiers and professional audio targets.

1 part per 1,000,000 (0.0001% noise | ~120 dB SNR)

Yield: Well below human hearing limits

At this level:

• Noise is completely inaudible

• Limited by room noise and hearing physiology

• No meaningful perceptual improvement over 1 part per 100,000 in real environments

This approaches the theoretical limits of digital audio and exceeds practical listening needs.

Why these thresholds matter

Human hearing is especially sensitive to:

• Noise during silence

• Low frequency hum and high frequency hiss

• Temporal modulation of noise

Once noise rises above roughly 1 part per 10,000, the illusion of silence breaks and immersion suffers, even if resolution and bandwidth remain high.

Practical takeaway

For transparent audio reproduction:

• 1:100,000 or better is perceptually clean

• ~1:10,000 is the edge of acceptability

• 1:1,000 or worse is clearly objectionable

This is why reference audio design prioritizes noise floor and dynamic range before chasing higher sample rates or extended bandwidth.
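To relate these noise levels to digital word length, a common textbook approximation for the best-case SNR of an ideal N-bit quantizer driven by a full-scale sine wave is about 6.02·N + 1.76 dB. The sketch below applies that approximation. It describes an idealized converter, not any particular product.

```python
import math


def ideal_quantizer_snr_db(bits: int) -> float:
    """Best-case SNR of an ideal uniform quantizer with a full-scale sine
    input: 20*log10(2**bits) + 10*log10(1.5), about 6.02*bits + 1.76 dB."""
    return 20.0 * math.log10(2.0 ** bits) + 10.0 * math.log10(1.5)


def bits_needed_for_snr(snr_db: float) -> int:
    """Smallest ideal word length whose best-case SNR meets the target."""
    bits = 1
    while ideal_quantizer_snr_db(bits) < snr_db:
        bits += 1
    return bits


if __name__ == "__main__":
    for bits in (16, 20, 24):
        print(f"{bits}-bit ideal SNR: {ideal_quantizer_snr_db(bits):.1f} dB")
    for target in (80, 100, 120):
        print(f"{target} dB needs at least {bits_needed_for_snr(target)} ideal bits")
```

Under this idealization, the 100 dB tier sits just beyond what a 16-bit word can deliver, and the 120 dB tier calls for about 20 bits, which real converters rarely achieve in full once analog noise is included.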

Observed Impact and Implications

The core message of this article is that perception shapes reality and faithful reproduction matters. A single, well preserved sensory experience can change emotion, judgment and life direction because the human brain rapidly constructs meaning from subtle visual and auditory cues. When those cues are altered, whether by visual distortion, audio noise or timing errors, the experience itself changes and so does its emotional and cognitive impact.